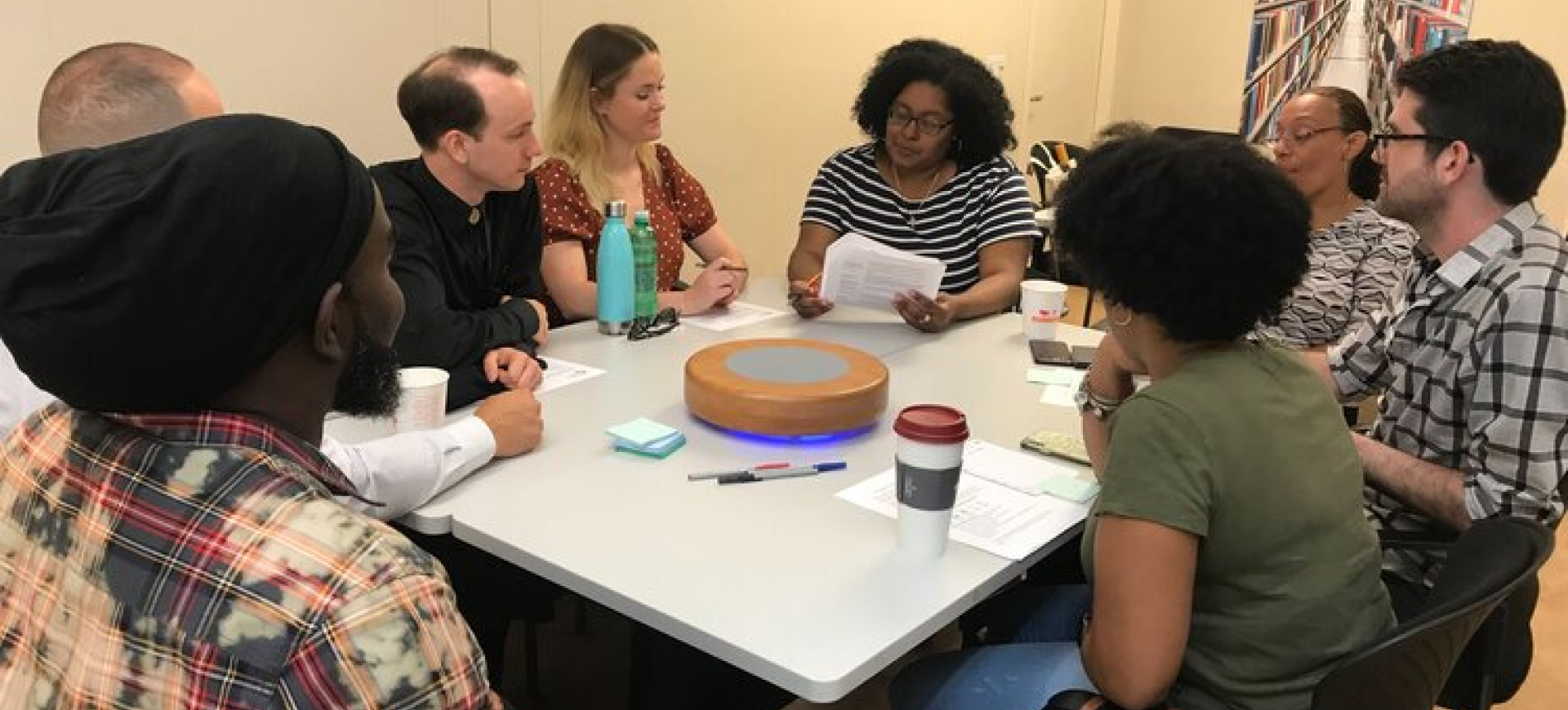

Imagine you’re hosting a Zoom call. It’s a conversation you’re facilitating between some members of your neighborhood, talking about what’s important to you. Everyone’s here! Better start the recording so you don’t forget. “Can you all see my screen?” The catchphrase of 2020, still going strong. Everything goes great: Joey talks about growing up in the 80s, Jordan tells a story about her mom, and at the very end Jamie stays on to tell you about their favorite peach pie recipe. Whoops, are you still recording? It’s alright, let’s look at a new tool Cortico has in the works that can help edit your new audio recording.

It’s not every day that you get to upload a conversation to the Local Voices Network, and when you do, I think it’s fair to assume that you’d want that conversation polished up a bit: slice off the parts of the audio that include some “pandemic-isms” (e.g. “Can everyone hear me?”, “Ope, you’re still on mute, Greg.”) or maybe the very end, when everyone has turned to the weather. At Cortico, this is known as a trim, and it has historically been a task for our steadfast Partner Support Lead, Kelly, one that has taken up a non-negligible amount of time. What follows is some of the work done this summer to take this process off the plate of Cortico staff and put it into the hands of Cortico partners, giving them more control over their own conversations and letting them make trims on their own time.

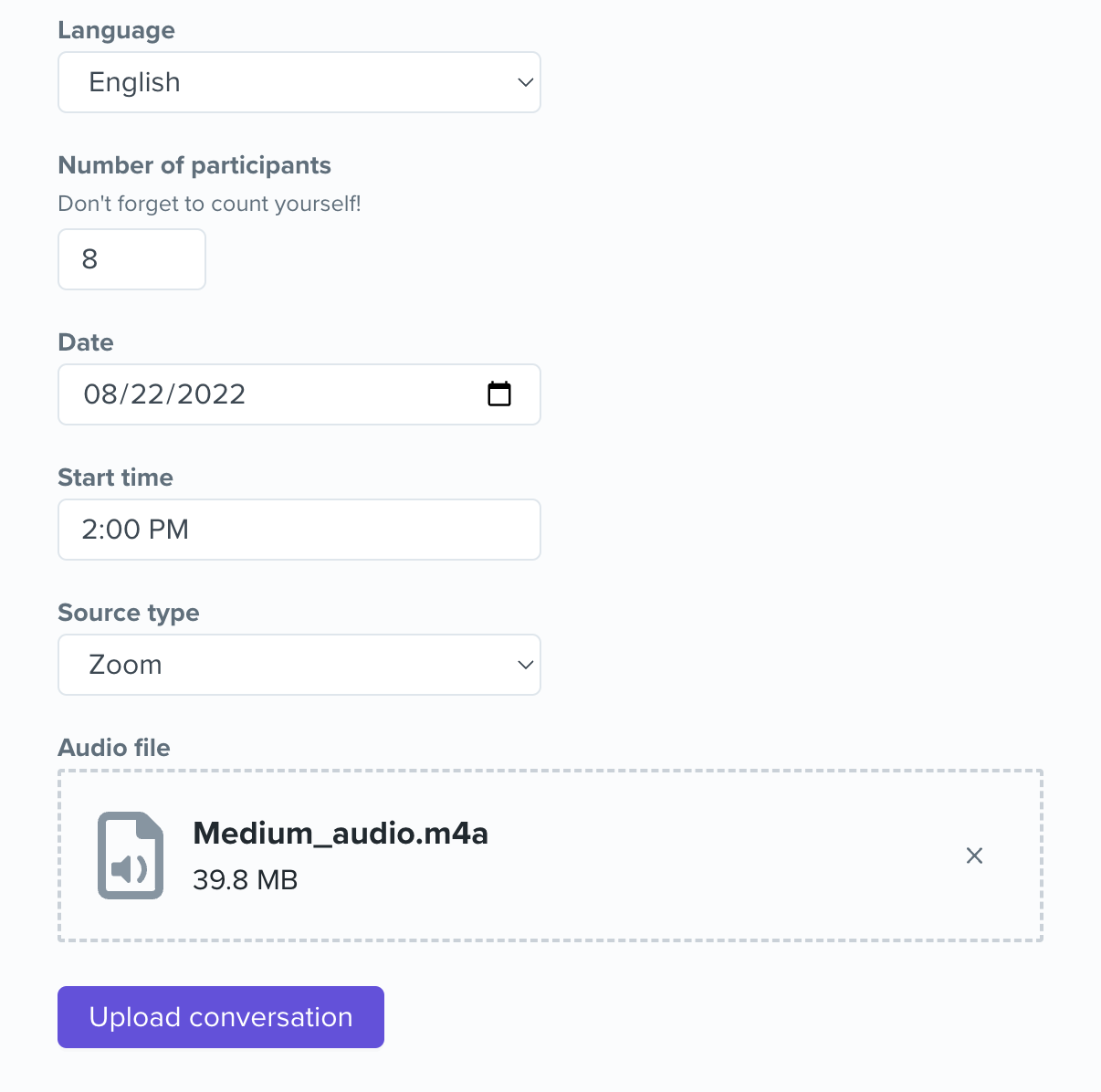

First, let’s briefly walk through the current process of making a trim.

As you can see… not very efficient! So let’s see how things changed.

As described above, all trims were done by request behind the scenes. So to implement a new trimming feature, a few questions had to be answered first:

The first two questions are easier to answer. Eventually we want whoever is uploading the conversation to be able to trim pre-upload.

The how is the trickier part.

In one word, the answer ended up being... Wavesurfer.

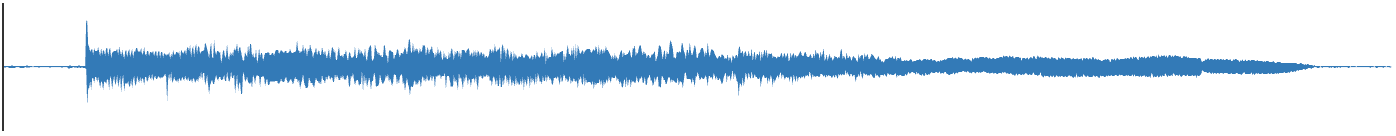

Wavesurfer.js is a versatile JavaScript library (we use it inside React) that displays audio as an interactive waveform, or in their words, “a customizable audio waveform visualization, built on top of Web Audio API and HTML5 Canvas”, and it looks like this:

Setting up a barebones Wave is simple enough: create a `<div>` using HTML or JSX, create a Wave instance with the included `create` method, then load your audio URL with the `load` method. On the simplest of web pages, this should be all that’s required to make the Wave appear!
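The two calls described above can be sketched roughly as follows. This is a minimal illustration, not our actual component: `mountWave`, the `WaveLike` and `WaveFactory` types, the `#waveform` selector and the audio file name are all made up for the example, and a stand-in factory is used so the snippet runs without a browser.

```typescript
// Structural types covering only the two calls described above; the real
// library accepts many more options than a container.
interface WaveLike {
  load(url: string): void;
}
interface WaveFactory {
  create(options: { container: string }): WaveLike;
}

// Hypothetical helper: create a Wave inside the container, then load the audio.
function mountWave(
  factory: WaveFactory,
  containerSelector: string,
  audioUrl: string
): WaveLike {
  const wave = factory.create({ container: containerSelector });
  wave.load(audioUrl);
  return wave;
}

// Stand-in factory that records the calls it receives (an assumption made
// purely so this sketch can run outside a web page).
const waveCalls: string[] = [];
const fakeFactory: WaveFactory = {
  create(options) {
    waveCalls.push(`create ${options.container}`);
    return {
      load: (url: string) => {
        waveCalls.push(`load ${url}`);
      },
    };
  },
};

mountWave(fakeFactory, "#waveform", "conversation.mp3");
```

On a real page with a `<div id="waveform"></div>`, the idea would be to pass the imported `WaveSurfer` object in place of `fakeFactory`.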

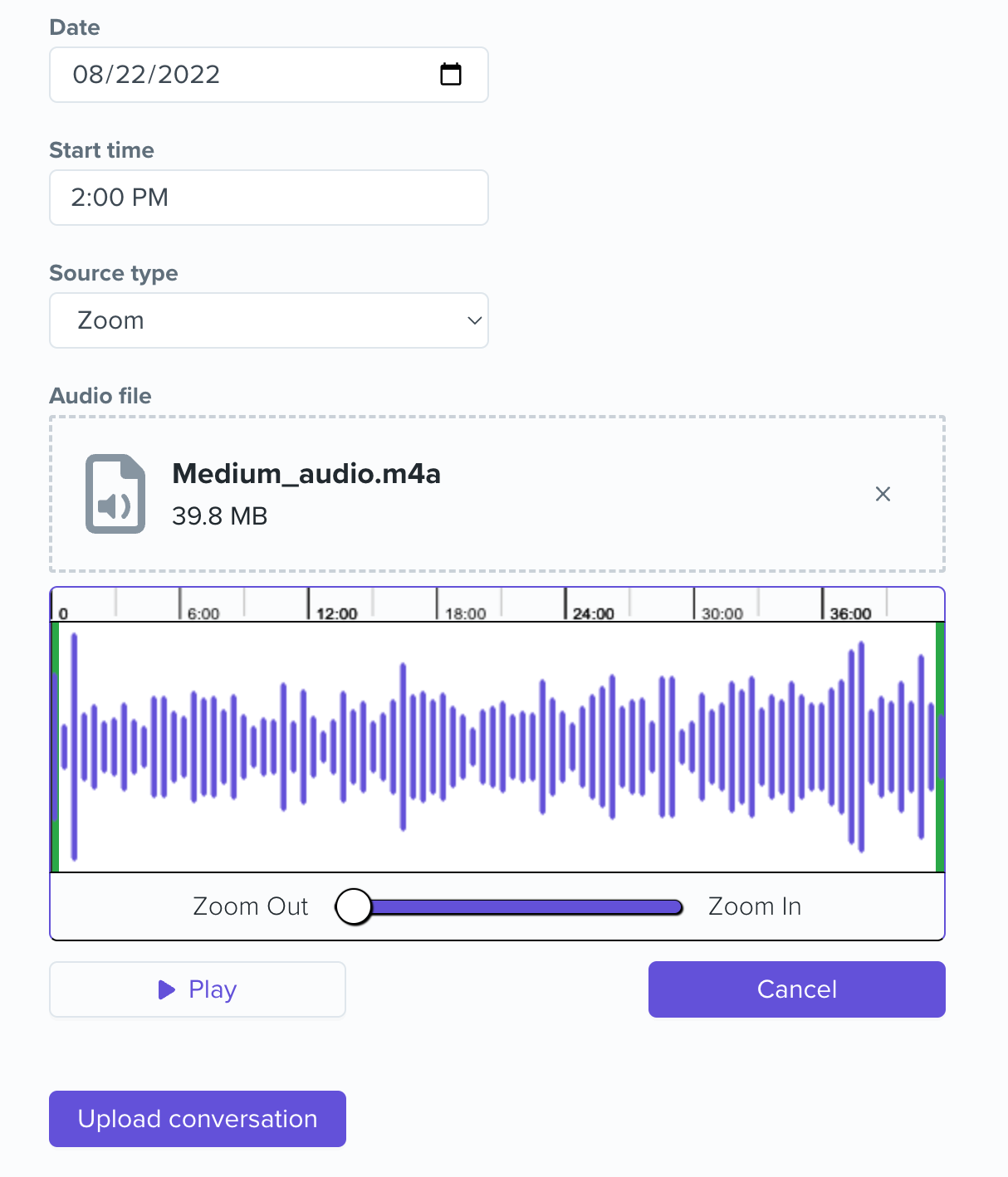

Of course, we wanted something a bit more exciting, and this is what we’ve ended up with:

Apart from some useful additions like the timeline that sits on top of the Wave and the Play/Cancel buttons that are connected to the Wave, there are three aspects of building this trimming feature that I want to dive a little deeper on:

The Wave is visually composed of anywhere from dozens to hundreds of small vertical lines depending on how long the audio is, and when you combine that with the re-rendering habits of React, you could guess that there might be some performance issues when zooming in and out of the Wave.

The workaround here was a debounce. There are entire blog posts about how debounce functions work, but basically we told the Wave to re-render only once the zoom slider has been still for 100 milliseconds, rather than on every tiny change. This removed the lag a user would experience while dragging the zoom slider and replaced it with a very short delay, a much better user experience.
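For readers who haven’t met debouncing before, here is a small generic sketch of the idea. It is not the code from our component: `debounce` is a hand-written helper, and `applyZoom` is a hypothetical stand-in for whatever actually re-renders the Wave at a new zoom level.

```typescript
// Generic trailing-edge debounce: `fn` runs once, `waitMs` after the most
// recent call. Every new call cancels the previously scheduled one.
function debounce<Args extends unknown[]>(
  fn: (...args: Args) => void,
  waitMs: number
): (...args: Args) => void {
  let timer: ReturnType<typeof setTimeout> | undefined;
  return (...args: Args) => {
    if (timer !== undefined) {
      clearTimeout(timer);
    }
    timer = setTimeout(() => {
      timer = undefined;
      fn(...args);
    }, waitMs);
  };
}

// Hypothetical stand-in for the expensive re-render of the Wave.
const appliedZoomLevels: number[] = [];
function applyZoom(level: number): void {
  appliedZoomLevels.push(level);
}

const onZoomSliderChange = debounce(applyZoom, 100);

// Dragging the slider fires many change events in quick succession...
onZoomSliderChange(20);
onZoomSliderChange(35);
onZoomSliderChange(50);
// ...but applyZoom runs only once, with the last value, 100 ms after the
// slider stops moving.
```

The trade-off is the one described above: the Wave no longer tries to keep up with every intermediate slider position, at the cost of a short pause before the final zoom level appears.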

The region that overlays the Wave is the main tool that a user will be using to successfully make a trim. We modeled the functionality after QuickTime Player in the way that the user will only ever hear what is selected within the region. However, getting the design of the wave overlay the way we wanted was trickier than expected.

By default, the region will rest over top of the Wave, typically creating an opaque layer that the user can slide and move around. But we really wanted the opposite effect — to make it look like the Wave was “closest” to the user and only have the region overlay appear when the user clicks and drags the large, green region handles on each end.

This was done by managing the z-indices of all elements in play; the region technically still rests over top of the Wave container, but it’s a white background, creating the illusion that the Wave is still on top because we changed the z-index of the vertical lines to rest over top of the region. The Wave itself has a grey, opaque color which is why it looks like you’re “creating” a new region when dragging the handle, when what you’re really doing is revealing the Wave underneath. A cool bit of sleight of hand here if you ask me.

Lastly, what happens when the user clicks the Submit button? How do the trim times that the user selects by dragging the region handles end up as a permanent trim?

The Wave is part of the form that the user submits, the same form that collects everything else the user enters. The form passes a function down to the Wave component, and the Wave calls it with the positions of the two region ends, which mark where the user would like to trim. The form then sends those times to our backend, where the trim gets made.
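Stripped of React, the “pass a function down, pass the values up” pattern looks something like the sketch below. Every name in it (`TrimTimes`, `createUploadForm`, `createWave`, the payload shape) is invented for illustration and does not reflect our real form or API.

```typescript
// Start and end of the selected region, in seconds (assumed unit).
interface TrimTimes {
  startSeconds: number;
  endSeconds: number;
}

interface UploadPayload {
  title: string;
  trim: TrimTimes | null;
}

// Hypothetical parent form: owns the trim state and builds what gets submitted.
function createUploadForm(title: string) {
  let trim: TrimTimes | null = null;
  return {
    // This is the function handed down to the Wave.
    onTrimChange(next: TrimTimes): void {
      trim = next;
    },
    buildPayload(): UploadPayload {
      return { title, trim };
    },
  };
}

// Hypothetical child: reports the region ends whenever the handles move.
function createWave(onTrimChange: (times: TrimTimes) => void) {
  return {
    dragRegion(startSeconds: number, endSeconds: number): void {
      onTrimChange({ startSeconds, endSeconds });
    },
  };
}

const form = createUploadForm("Neighborhood conversation");
const wave = createWave(form.onTrimChange);

// The user drags the handles; the form now knows where to trim.
wave.dragRegion(12.5, 1830);
const payload = form.buildPayload();
```

Keeping the trim times in the form, rather than in the Wave, means the Submit button only ever has to read from one place.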

As of August 2022, this feature is going through a soft launch, so you may not be seeing it yet. Work has already begun on the next feature for our partners: making redactions on conversations that have already been uploaded. One benefit of having conversations locally facilitated by Cortico’s partners is that participants frequently feel comfortable enough to share anything from their honest opinions to their life stories. An unintended consequence of feeling so relaxed is that occasionally someone will say something they wish they hadn’t (e.g. the secret ingredient to your mother-in-law’s famous cookie recipe). So keep an eye out for that, and thank Kelly for making these edits in the meantime!